The hype and the reality

In the early stages of my career, the loudest buzzword in tech was Big Data. Every company claimed to be sitting on oceans of information, and every vendor promised a magical way to turn it into gold. Much of the hype faded, but the shift in data technologies was real — it reshaped how enterprises built analytics and made decisions.

A decade later, the pattern is repeating, only louder. This time, the buzzword is AI adoption. Every boardroom is asking how to get copilots, apps, and agents into production, and the hype already exceeds anything Big Data generated. Once again, though, there's a genuine shift underway behind the noise, one that could reshape how enterprises operate.

But here’s the truth: without governance, this shift isn’t innovation — it’s liability. That’s why we need a new principle for this era: Governance-First AI.

The database badge system

The analogy I like to use is an office building with security badges. Traditional access control in databases worked the same way. Through IAM and RBAC, users were assigned broad roles — finance, HR, marketing — and those roles determined which “floors” of the database they could enter. Sometimes OAuth or SSO mediated the login, but the role always decided the access.

This model made sense: it was simple for administrators to manage, most employees were considered trusted insiders, and databases weren’t built to enforce row- or field-level rules at scale. The kinds of questions this model supported were straightforward: “What was last quarter’s revenue?” or “How many employees are in each department?” Dashboards and reports were built off these broad permissions. All valuable stuff.
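To make the badge model concrete, here is a minimal sketch of coarse, role-based grants at the table level. The role names, table names, `ROLE_GRANTS`, and `can_access` are all hypothetical. This is an illustration of the idea, not any particular database's RBAC implementation.

```python
# Hypothetical role-to-table grants: each role's "badge" opens whole tables ("floors").
ROLE_GRANTS: dict[str, set[str]] = {
    "finance": {"revenue", "gl_entries"},
    "hr": {"employees", "departments"},
    "marketing": {"campaigns"},
}


def can_access(role: str, table: str) -> bool:
    """Return True if the role's badge opens the given table."""
    return table in ROLE_GRANTS.get(role, set())


# The check is all-or-nothing: once allowed in, a role sees every row and column.
print(can_access("finance", "revenue"))      # True
print(can_access("marketing", "employees"))  # False
```

The check answers only "which floor?". Nothing in it can express "only these rows" or "mask this column", which is exactly the gap the next section describes.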

But Governance-First AI demands more. Broad badges are not enough when AI systems, not just humans, are inside the building.

The shift to detail — offices and drawers

At first, the badge was enough to get you to the right floor. But soon, people wanted to open the actual offices and drawers inside: every row, every field of the data. That's where the next level of value lives. Could an AI agent reconcile thousands of general ledger (GL) accounts? Could it scan transaction records to flag fraud? Could it give a portfolio manager just the right slice of data, and nothing more?

The infrastructure is ready. Modern cloud databases, vector stores, and real-time pipelines make this kind of fine-grained analysis fast and inexpensive.

The gap is governance. IAM and RBAC were never designed to decide which drawers you can open, which fields should be masked, or how to log every action for compliance. So enterprises fall back on clumsy workarounds: multiplying database views, inserting brittle proxies, or hard-coding filters into applications. None of these approaches provides consistent audit trails or reliably satisfies frameworks like SOX, HIPAA, or GDPR.

Governance-First AI means setting policies at this level — deciding which drawers can be opened, which fields must be masked, and ensuring every access is auditable.

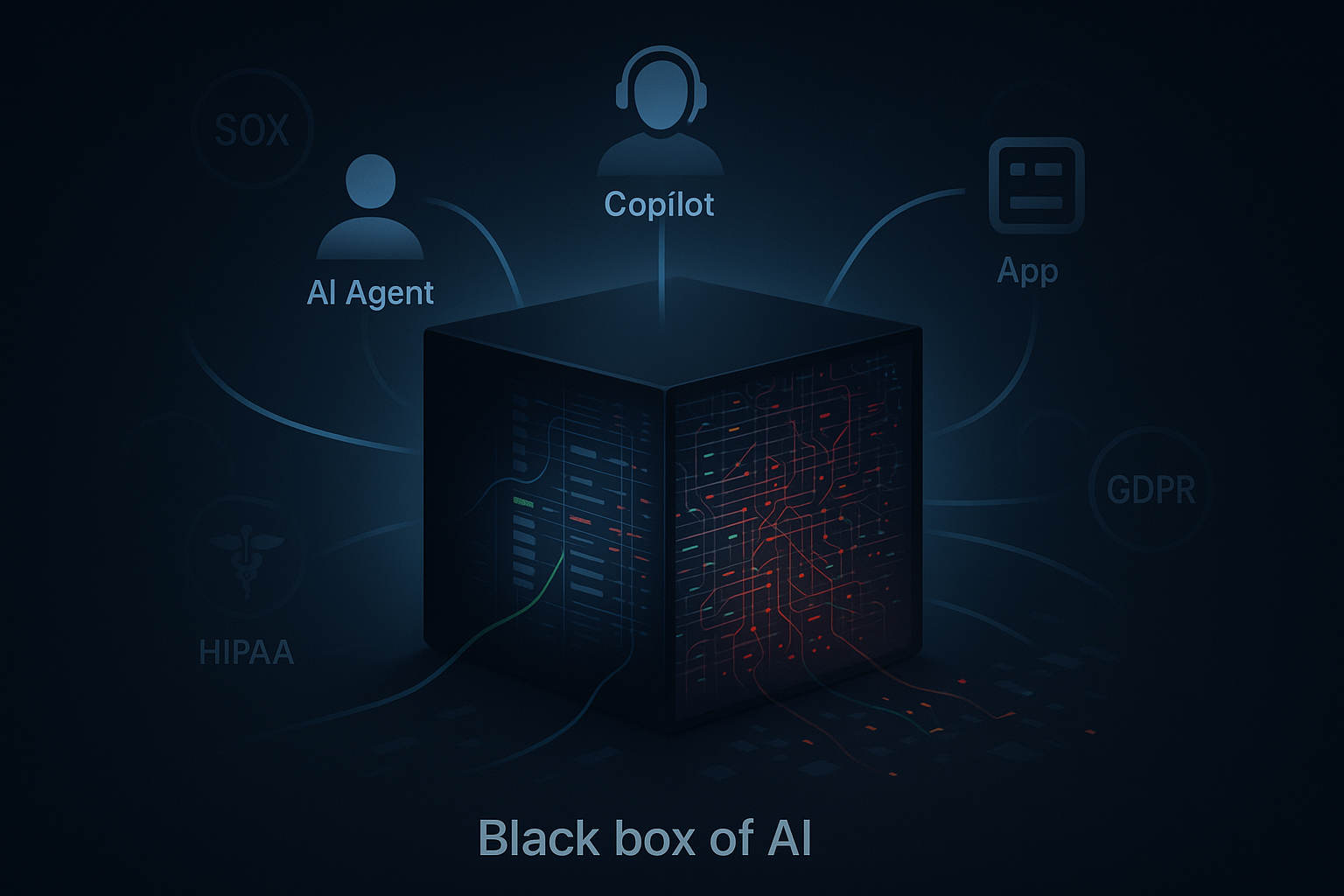
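As a rough illustration of a drawer-level policy, the sketch below combines a row filter, field masking, and an audit record in one place. The `DrawerPolicy` shape, the `read_with_policy` helper, and the sample columns (`country`, `holder_ssn`, `balance`) are hypothetical. Real systems typically enforce this inside the database or a governance layer rather than in application code.

```python
from collections.abc import Callable
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class DrawerPolicy:
    """Hypothetical policy: which rows ("drawers") open and which fields are masked."""
    row_filter: Callable[[dict], bool]
    masked_fields: set[str]


AUDIT_LOG: list[dict] = []  # in-memory stand-in for a durable audit store


def read_with_policy(user: str, policy: DrawerPolicy, rows: list[dict]) -> list[dict]:
    """Drop rows the policy excludes, mask protected fields, and record an audit entry."""
    visible = [
        {k: ("***" if k in policy.masked_fields else v) for k, v in row.items()}
        for row in rows
        if policy.row_filter(row)
    ]
    AUDIT_LOG.append({
        "user": user,
        "at": datetime.now(timezone.utc).isoformat(),
        "rows_returned": len(visible),
        "masked_fields": sorted(policy.masked_fields),
    })
    return visible


accounts = [
    {"account_id": "A1", "country": "CA", "holder_ssn": "123-45-6789", "balance": 1200},
    {"account_id": "A2", "country": "US", "holder_ssn": "987-65-4321", "balance": 800},
]
pm_policy = DrawerPolicy(row_filter=lambda r: r["country"] == "CA", masked_fields={"holder_ssn"})
print(read_with_policy("pm_alice", pm_policy, accounts))
# [{'account_id': 'A1', 'country': 'CA', 'holder_ssn': '***', 'balance': 1200}]
```

Keeping the filter, the mask, and the log in a single code path is the point. The view, proxy, and hard-coded-filter workarounds scatter these three concerns, so no single place produces a consistent audit trail.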

The activity inside — the black box of AI use

If the badge system controlled which floors you could enter, and the drawers represented the rows and fields, the next challenge is what actually happens inside those rooms. This is where AI adoption becomes a black box. It’s no longer just a few analysts running queries — it’s apps, copilots, team members, and autonomous agents all interacting with live data, often in ways that are difficult to see or control.

Every interaction matters. Which fields were touched? Was sensitive information masked? Did an app request data only for its intended user, or did it overreach? How many queries did a copilot generate in one session, and how were results reused downstream?
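Each of those questions can be answered from a per-query activity record. The sketch below assumes a hypothetical `AccessEvent` schema and a hypothetical set of sensitive column names. It shows how the questions map to simple checks: unmasked sensitive fields, requests made on behalf of someone other than the session's user, and query counts per session.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessEvent:
    """Hypothetical activity record for one query issued by an app, copilot, or agent."""
    session_id: str
    actor: str                      # e.g. "copilot" or "reporting_app"
    on_behalf_of: str               # user the request claims to serve
    fields_touched: frozenset[str]
    fields_masked: frozenset[str]


SENSITIVE = frozenset({"holder_ssn", "account_number"})  # assumed sensitive columns


def unmasked_sensitive(event: AccessEvent) -> set[str]:
    """Sensitive fields this event read without masking."""
    return set((event.fields_touched & SENSITIVE) - event.fields_masked)


def overreaching(events: list[AccessEvent], session_user: dict[str, str]) -> list[AccessEvent]:
    """Events that requested data for someone other than the session's authenticated user."""
    return [e for e in events if e.on_behalf_of != session_user[e.session_id]]


def queries_per_session(events: list[AccessEvent]) -> Counter:
    """How many queries each session generated."""
    return Counter(e.session_id for e in events)


events = [
    AccessEvent("s1", "copilot", "alice", frozenset({"balance"}), frozenset()),
    AccessEvent("s1", "copilot", "alice", frozenset({"holder_ssn"}), frozenset({"holder_ssn"})),
    AccessEvent("s1", "copilot", "bob", frozenset({"account_number"}), frozenset()),
]
session_user = {"s1": "alice"}

print([unmasked_sensitive(e) for e in events])  # [set(), set(), {'account_number'}]
print(len(overreaching(events, session_user)))  # 1
print(queries_per_session(events))              # Counter({'s1': 3})
```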

This activity layer grows fast. A single table row may be accessed hundreds of times across different workflows, so across a large dataset the access events quickly number in the millions and can far outnumber the rows they touch.

Enterprises don’t just need to decide who can enter the building or which drawers they can open. They need Governance-First AI to bring real-time visibility and audit of every interaction inside. Without it, AI adoption at scale remains a black box — and therefore a liability.

Where’s the value?

The most important point is that there's value at every level of access. Role-based controls (badges) gave enterprises a way to manage broad permissions. Row- and field-level access (drawers) unlocks richer use cases on the same data. And activity-level visibility (opening the black box of AI use) makes every query and interaction auditable.

The problem is, enterprises have treated these as separate worlds. IAM and RBAC govern the badge system. Developers hack together views and filters for drawer-level access. Security teams try to bolt on logging for the activity layer. None of it connects.

The real value moving forward will be governance that spans all three. Imagine being able to join badge, drawer, and activity data in one system: proving that a portfolio manager (role) queried only Canadian accounts (rows) and that the copilot used in the workflow never touched restricted identifiers (fields and activity). That's what turns AI adoption from a black box into a system enterprises can trust.
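A toy version of that cross-layer check might look like the sketch below. The in-memory dictionaries stand in for the role, row, and activity stores, and every name in them (`ROLES`, `ACCOUNT_COUNTRY`, `RESTRICTED_FIELDS`, `prove_compliance`) is hypothetical. The sketch shows the shape of the join, not how any product implements it.

```python
# Hypothetical records from the three layers.
ROLES = {"alice": "portfolio_manager"}                   # badge layer
ACCOUNT_COUNTRY = {"A1": "CA", "A2": "CA", "A3": "US"}   # row attribute (drawer layer)
RESTRICTED_FIELDS = {"holder_ssn", "tax_id"}             # fields the copilot must not read

ACTIVITY = [                                              # activity layer
    {"user": "alice", "actor": "copilot", "account_id": "A1", "fields": {"balance"}},
    {"user": "alice", "actor": "copilot", "account_id": "A2", "fields": {"balance", "holder_name"}},
]


def prove_compliance(user: str, role: str, country: str, activity: list[dict]) -> dict[str, bool]:
    """Join role, row, and activity data to check one user's workflow."""
    mine = [e for e in activity if e["user"] == user]
    return {
        "has_role": ROLES.get(user) == role,
        "only_allowed_rows": all(ACCOUNT_COUNTRY[e["account_id"]] == country for e in mine),
        "copilot_avoided_restricted": all(
            not (e["fields"] & RESTRICTED_FIELDS) for e in mine if e["actor"] == "copilot"
        ),
    }


print(prove_compliance("alice", "portfolio_manager", "CA", ACTIVITY))
# {'has_role': True, 'only_allowed_rows': True, 'copilot_avoided_restricted': True}
```

A user with no recorded activity passes the row and field checks trivially, because `all()` over an empty sequence is `True`. A real audit would likely want to flag missing activity separately.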

And that’s exactly why we built LLMac — the governance layer designed around the principle of Governance-First AI, making adoption safe across every level.