Empower

Empower every team to adopt AI safely.

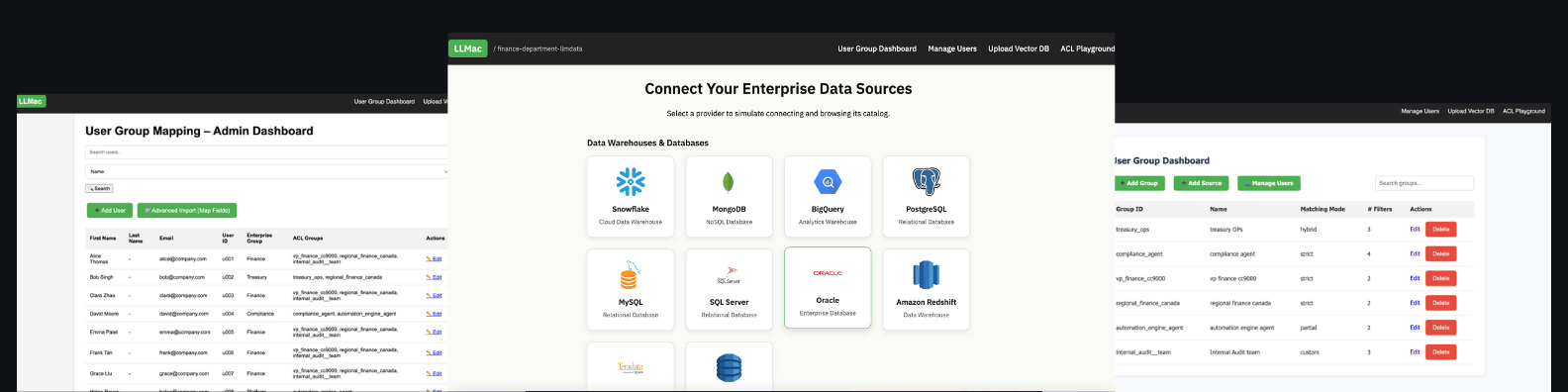

LLMac isn’t just about controlling access; it’s about enabling safe exploration. Your teams and AI agents can query, test, and collaborate with enterprise data knowing every result is compliant, filtered, and auditable. Exploration becomes fearless when governance is built in.

Any app, any agent

LLMac works with your enterprise AI stack of choice — copilots, custom AI apps, or third-party LLMs. No matter the interface (web, desktop, or mobile), access controls remain consistent and enforceable.

Flexible reporting & sandboxing

Simulate AI queries in a safe sandbox before giving agents production access. Run policy tests on Finance, HR, or R&D datasets to validate outcomes.

Share queries and results directly across teams with full audit context.

Essential guardrails

Built-in protections: Prompt injection and RAG poisoning defenses. Row-, column-, and field-level filters at query time. Cross-database and multi-cloud enforcement so you always work with the full, trusted picture.
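
As a rough illustration of what row- and column-level filtering at query time can look like, here is a minimal Python sketch. It does not use LLMac's SDK or reflect its internals; the `Policy` class, its fields, the `apply_policy` helper, and the sample data are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Any, Callable

Row = dict[str, Any]


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy: which columns are visible and which rows pass."""
    allowed_columns: frozenset[str]
    row_predicate: Callable[[Row], bool]


def apply_policy(rows: list[Row], policy: Policy) -> list[Row]:
    """Drop rows that fail the predicate, then strip columns not allowed."""
    return [
        {key: value for key, value in row.items() if key in policy.allowed_columns}
        for row in rows
        if policy.row_predicate(row)
    ]


# Illustrative data only; not taken from any real dataset.
employees = [
    {"name": "Ana", "dept": "HR", "salary": 90000},
    {"name": "Ben", "dept": "Finance", "salary": 120000},
]

# Assumed rule: this caller may see HR rows only, and never the salary column.
hr_policy = Policy(
    allowed_columns=frozenset({"name", "dept"}),
    row_predicate=lambda row: row["dept"] == "HR",
)

filtered = apply_policy(employees, hr_policy)
```

The point of the sketch is that filtering happens on the result set before anything reaches the model or the user, so a prompt cannot talk its way past the filter.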

Collaborative by design

LLMac makes AI exploration a team sport. Policy files and access models are reusable across departments, and results can be validated and shared with colleagues or auditors — no hidden black box, just transparent governance.

Built for developers

Developers can integrate LLMac directly into apps via the SDK, APIs, or policy JSON. Extend protections into any custom workflow or product, while maintaining consistency with enterprise access rules.
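
LLMac's policy JSON schema and SDK calls are not shown here, so the following is only a sketch of how an app might load a JSON policy and check a request against it before running a query. Every field name (`rules`, `role`, `dataset`, `columns`) and both functions are assumptions made for illustration, not LLMac's actual format or API.

```python
import json

# Hypothetical policy document; the schema is invented for this example.
POLICY_JSON = """
{
  "rules": [
    {"role": "analyst", "dataset": "finance", "columns": ["region", "revenue"]},
    {"role": "hr_partner", "dataset": "hr", "columns": ["name", "dept"]}
  ]
}
"""


def load_rules(text: str) -> list[dict]:
    """Parse the policy text and return its list of rules."""
    return json.loads(text)["rules"]


def is_allowed(rules: list[dict], role: str, dataset: str, columns: list[str]) -> bool:
    """Return True only if a rule for this role and dataset covers every requested column."""
    for rule in rules:
        if rule["role"] == role and rule["dataset"] == dataset:
            if set(columns) <= set(rule["columns"]):
                return True
    return False  # Deny by default when no rule matches.


rules = load_rules(POLICY_JSON)
```

A custom workflow could call a check like `is_allowed(rules, "analyst", "finance", ["revenue"])` before issuing a query, and refuse the request when it returns `False`.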